Building blocks of a data-driven organization

April 19, 2021

What’s a data-driven organization?

A data-driven organization understands the value of data and uses its customer and operational data to improve profitability and gain a competitive advantage.

What differentiates a data-driven organization is its ability to easily find and use reliable data to answer a business question or develop a new product.

By building a portfolio of data assets, a data-driven company sets the stage to launch various data & analytics initiatives. With a proper strategy, businesses can maximize the value generated by these initiatives.

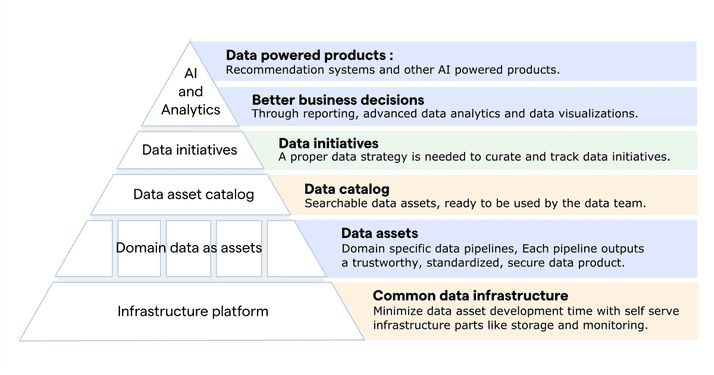

The following diagram describes the organizational components that are needed to build a data-driven enterprise. These blocks do not need to be implemented in order. Different parts can be started separately, but a data-driven enterprise needs all of these blocks.

Data assets are one of the key components of this diagram.

What are data assets, and what role do they play in a data-driven organization?

Data assets are the building blocks on which data-driven companies rely to build new products, provide more value to customers and optimize all parts of the business.

At Uber, an example of a data asset would be a real-time event stream of driver statuses per area. It would be owned by a “driver” data domain team.

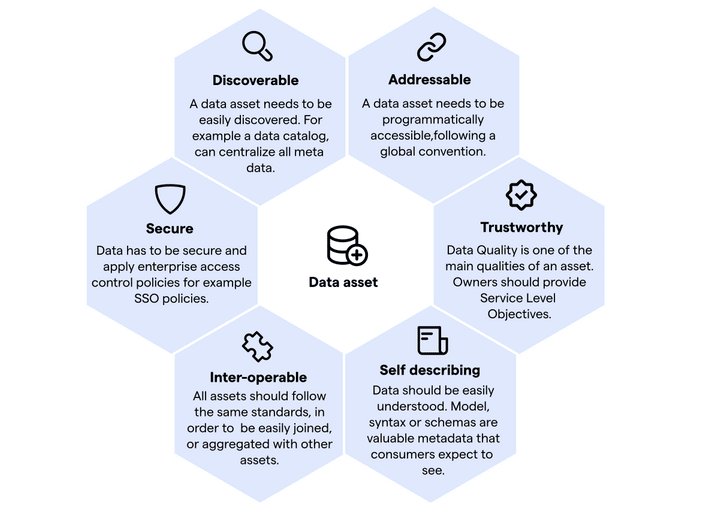

The team designs the data pipeline that generates the data and gives it the qualities that turn regular data into a data asset. Below are the qualities enumerated by Zhamak Dehghani in her article about Distributed Data Mesh.

A data asset is:

- Discoverable: A data asset needs to be easy to discover. For example, a data catalog can centralize all metadata.

- Addressable: A data asset needs to be programmatically accessible, following a global convention.

- Trustworthy: Data quality is one of the main qualities of an asset. Owners should provide Service Level Objectives.

- Self-describing: Data should be easily understood. Models, syntax and schemas are valuable metadata that consumers expect to see.

- Interoperable: All assets should follow the same standards, so that they can be easily joined or aggregated with other assets.

- Secure: Data has to be secure and subject to enterprise access control policies, for example SSO policies.
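To make these qualities more concrete, here is a minimal sketch of how a data asset could be described and registered in a catalog. Everything in it is hypothetical and chosen for illustration: the `DataAsset` and `Catalog` classes, the `asset://` address convention, the field names and the SLO keys do not come from the article or from any specific tool.

```python
from dataclasses import dataclass


@dataclass
class DataAsset:
    """Hypothetical descriptor holding the metadata behind each quality."""
    name: str                 # discoverable: the key used in the catalog
    address: str              # addressable: follows one global convention
    owner: str                # data domain team that owns the asset
    schema: dict              # self-describing: field name -> type
    slo: dict                 # trustworthy: published service level objectives
    allowed_roles: frozenset  # secure: roles granted read access


class Catalog:
    """In-memory stand-in for a catalog (a real one would be a shared service)."""

    def __init__(self):
        self._assets = {}

    def register(self, asset):
        # Interoperable: every asset must follow the same (assumed) convention.
        if not asset.address.startswith("asset://"):
            raise ValueError(f"address must start with asset://: {asset.address}")
        self._assets[asset.name] = asset

    def search(self, keyword):
        # Discoverable: match on the asset name or on any schema field name.
        keyword = keyword.lower()
        return [
            a for a in self._assets.values()
            if keyword in a.name.lower()
            or any(keyword in f.lower() for f in a.schema)
        ]

    def can_read(self, name, role):
        # Secure: access is decided from the roles declared on the asset.
        return role in self._assets[name].allowed_roles


catalog = Catalog()
catalog.register(DataAsset(
    name="driver_status_by_area",
    address="asset://driver/driver_status_by_area/v1",
    owner="driver",
    schema={"driver_id": "string", "area": "string", "status": "string"},
    slo={"max_missing_ratio": 0.01, "max_delay_seconds": 5},
    allowed_roles=frozenset({"rider-app", "analytics"}),
))
```

A consumer could then look the asset up with `catalog.search("status")` and check access with `catalog.can_read("driver_status_by_area", "analytics")` before reading from its address.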

In 2016, a consortium of scientists in Europe also introduced similar principles, called the FAIR data principles (Findable, Accessible, Interoperable, Reusable).

A data asset is a valuable product that, “as any technical product, needs to delight its consumers, in this case data engineers, ML engineers or data scientists” (Zhamak Dehghani).

Once the data asset is available, it’s ready to be used by other data domain teams to create new data assets, or used for business analytics and machine learning initiatives.

In the case of the driver status real-time indicator, developers can use the data asset to unlock an essential feature: showing customers a real-time indicator of the drivers available in their area.

Data pipelines are pieces of infrastructure that can get very complex, and they often need the same capabilities. What can a data-driven organization do to optimize the engineering and management of these assets?
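As an illustration of how a consumer might use such an asset, the sketch below folds a batch of driver status events into a count of available drivers per area. The event shape (`driver_id`, `area`, `status`) and the status values are assumptions made for this example, not Uber's actual schema, and a production version would read from a stream rather than a list.

```python
from collections import defaultdict


def available_drivers_by_area(events):
    """Count available drivers per area from ordered status events.

    Each event is a dict with the hypothetical keys driver_id, area and
    status. A later event for a driver replaces that driver's earlier one.
    """
    latest = {}  # driver_id -> (area, status)
    for event in events:
        latest[event["driver_id"]] = (event["area"], event["status"])

    counts = defaultdict(int)
    for area, status in latest.values():
        if status == "available":
            counts[area] += 1
    return dict(counts)


events = [
    {"driver_id": "d1", "area": "downtown", "status": "available"},
    {"driver_id": "d2", "area": "downtown", "status": "available"},
    {"driver_id": "d3", "area": "airport", "status": "on_trip"},
    {"driver_id": "d2", "area": "downtown", "status": "on_trip"},  # d2 starts a trip
]
indicator = available_drivers_by_area(events)
print(indicator)
```

Keeping only the latest status per driver is what makes the count reflect the current situation: in this sample, driver `d2` is no longer counted once the trip starts.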

Infrastructure platform in the service of data asset development

To give data assets features like catalog discoverability and data quality assessment, and to minimize the time to production, data engineers need a set of common capabilities. By building a platform team whose role is to provide these infrastructure pieces, a data-driven organization can optimize the development effort.

The infrastructure platform provides:

- Scalable storage: Data assets can have different formats. Some data assets are real-time streams, others are static files. Storage choice should be a no-brainer for data engineers. The infrastructure platform will provide these storage options.

- Data versioning: With versioning, data engineers can easily answer questions like: What changed in the data? When? Who made the change? An infrastructure platform can automate data versioning and provide engineers with a versioning API.

- Data privacy and compliance: The data platform can provide a set of features to make the data compliant with privacy regulations like GDPR or CCPA. Examples: data anonymization, personal data detection or automated data retention policies.

- Data lineage and observability: With data lineage, we can answer questions like: Where did the data come from? How was it created? What processing steps did it go through? Using distributed tracing and code instrumentation, the infrastructure platform will automate data lineage.

- Data pipeline monitoring, alerting and logging: Debugging data pipelines can be a difficult task because of the complexity of the architecture. The infrastructure platform provides data engineers with monitoring tools to manage and maintain data assets.

- Data quality metrics: In order to build trustworthy data assets, the infrastructure platform can provide automated features for statistical analysis of data. Examples: distribution of data, missing data ratios… These metrics can be used to define service level objectives (SLOs).

- Access controls: Company access controls are globally defined. All data assets should use the same rules in order to provide secure assets. The infrastructure platform will enforce these global access controls.

- Data pipeline implementation and orchestration: Tools to build and orchestrate data pipelines.

- Data discovery and catalog registration: The infrastructure platform also provides the tools to make the data discoverable and its metadata available to data asset users.
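As one example of such a shared capability, the data quality metrics item can be sketched as a small helper that measures missing data ratios and compares them with thresholds declared by the asset owner. The function names, the record shape and the thresholds are assumptions made for this illustration and do not refer to a specific platform.

```python
def missing_ratio(records, field):
    """Share of records where `field` is absent or None."""
    if not records:
        return 0.0
    missing = sum(1 for r in records if r.get(field) is None)
    return missing / len(records)


def check_slos(records, slos):
    """Compare measured missing ratios with per-field thresholds.

    `slos` maps a field name to the maximum tolerated missing ratio.
    Returns {field: (measured_ratio, within_slo)}.
    """
    report = {}
    for field, max_ratio in slos.items():
        ratio = missing_ratio(records, field)
        report[field] = (ratio, ratio <= max_ratio)
    return report


records = [
    {"driver_id": "d1", "area": "downtown"},
    {"driver_id": "d2", "area": None},
    {"driver_id": "d3", "area": "airport"},
    {"driver_id": "d4", "area": "airport"},
]
# Hypothetical objectives: no missing driver_id, at most 10% missing area.
report = check_slos(records, {"driver_id": 0.0, "area": 0.1})
```

Here `area` is missing in one record out of four, so its measured ratio of 0.25 exceeds the 0.1 objective and the check flags it. If the platform runs this kind of check for every asset, each domain team gets the same quality signal without rewriting it.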

Conclusion

In this article, we talked about technical and organizational components of a data-driven organization. But becoming a data-driven company is no easy task, especially for established companies. It needs thoughtful planning and solid sponsorship from leaders, who need to develop a data culture at all levels of the organization.

References:

- Distributed Data Mesh by Zhamak Dehghani